A/B Testing UX - An Introduction

In the ever-evolving realm of digital experiences, crafting a user-friendly interface that resonates with your audience is a constant pursuit. That's where UX A/B testing steps into the spotlight. User experience A/B testing is a data-driven journey that empowers UX designers to fine-tune and perfect their creations.

In this blog, we'll delve into the intricacies of A/B testing, exploring how it works, its advantages, and potential pitfalls. From defining objectives to analysing results, you'll discover the secrets to optimising your digital products and websites, all while enhancing user engagement and satisfaction.

What is UX?

UX stands for User Experience. It refers to a person's overall experience when interacting with a product, service, system, or interface. UX design is a multidisciplinary field encompassing various aspects of designing and improving the user's interaction with these entities to make them more user-friendly, efficient, and enjoyable. It is also an iterative process: UX designers continually refine products or services as they learn more about their users.

Key components of UX design include:

- Usability: Ensuring a product or system is easy to use and navigate, with intuitive and efficient user interfaces.

- Accessibility: Making sure that the product or service can be used by people with disabilities or those who use assistive technologies.

- Usefulness: Ensuring the product or service effectively solves a real problem or meets the user's needs.

- Desirability: Making the product visually appealing and engaging to users, creating a positive emotional response.

- Credibility: Building trust and confidence in the product or service through transparent information, security, and reliability.

- Performance: Ensuring that the product or system operates quickly and efficiently, minimising loading times and errors.

- User Research: Conducting research to understand user behaviour, needs, and preferences, which helps inform design decisions.

- Information Architecture: Organising content and information in a logical and user-friendly manner, making it easy for users to find what they need.

- Interaction Design: Defining how users will interact with the product or service, including the layout of buttons, menus, and other interactive elements.

- User Testing: Collecting feedback from real users to identify issues and opportunities for improvement.

UX design is crucial in today's digital age because it directly impacts how users perceive and engage with products and services. A positive user experience can lead to increased user satisfaction, customer loyalty, and the success of a product or business. Conversely, a poor user experience can result in frustration, abandonment, and negative reviews, harming a product's reputation and business performance.

What is A/B Testing?

UX A/B testing is a variant of traditional A/B testing that specifically focuses on improving the user experience of a website, application, or digital product. It involves comparing two or more versions of a user interface or specific design elements to determine which provides a better user experience and best meets clearly defined usability or engagement goals.

A/B testing works by randomly splitting users into two groups of roughly equal size so that neither group is biased. One element is then varied: Group A sees one version and Group B sees another. UX designers analyse the resulting metrics and compare them against their original goals. From there, they can make informed decisions or decide whether more testing is required.

UX A/B testing is a valuable tool for UX designers and product teams to make data-driven decisions that lead to better user experiences. It helps identify which design choices resonate with users and can lead to increased engagement, satisfaction, and conversions, ultimately improving the overall success of a digital product.

How is A/B Testing Used for UX?

A/B testing is used in UX to improve the design and functionality of websites, applications, and digital products. Here are some common use cases for A/B testing in UX:

UI Design Optimisation

A/B testing can be used to compare different user interface (UI) designs, such as layouts, colour schemes, typography, and button styles, to determine which design elements provide a better user experience and lead to higher user engagement.

Content Placement

It helps determine the most effective content placement on a webpage or within an app, including text, images, and multimedia elements. This can optimise user engagement and information consumption.

Navigation and Information Architecture

A/B testing can assess different navigation structures, menu layouts, and information hierarchies to ensure that users can easily find the content they are looking for and navigate the product intuitively.

Call-to-Action (CTA) Buttons

A/B tests can compare variations of CTA buttons, such as different wording, colours, sizes, and placements, to identify which ones drive more conversions and user interactions.

Form and Input Field Design

UX A/B testing can be used to refine the design of forms and input fields, including field labels, input validation messages, and error handling, to improve the usability and completion rates of forms.

Onboarding and User Flows

It helps optimise the onboarding process and user flows within a product by testing different sequences of screens, tooltips, tutorials, and user guidance features.

Personalisation

A/B testing can be used to test personalised content recommendations, product recommendations, or tailored user experiences to determine which versions lead to increased user engagement and satisfaction.

Mobile Responsiveness

It is used to ensure that mobile-responsive designs provide an optimal user experience on various devices and screen sizes.

Load Times and Performance

A/B testing can assess the impact of performance optimisations, such as reducing page load times or minimising errors, on user satisfaction and engagement.

Feature Testing

When introducing new features or functionality, A/B testing helps evaluate their impact on user behaviour and satisfaction, allowing for adjustments or improvements.

Accessibility Testing

It can be used to test accessibility features and improvements to ensure that the product is usable by people with disabilities and complies with accessibility standards.

Localisation and Language

A/B testing can assess language translations, localised content, or region-specific variations to determine which versions resonate best with users in different markets.

A/B testing in UX is a valuable tool for making data-driven design decisions and continually improving the user experience. It allows UX designers and product teams to test hypotheses, validate design choices, and optimise digital products to better meet users' needs and preferences, ultimately leading to increased user satisfaction and improved business outcomes.

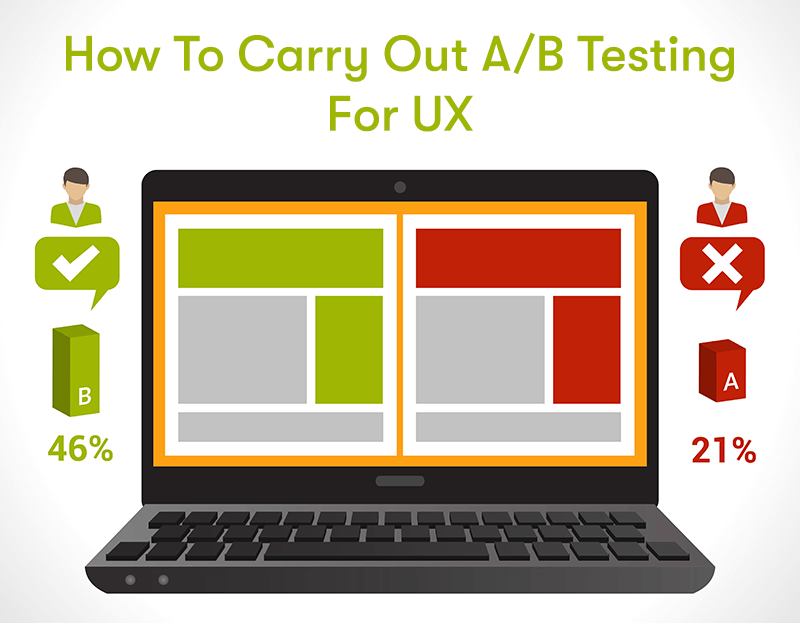

How to Carry Out A/B Testing for UX

Carrying out A/B testing for UX involves systematically comparing two or more design variations and determining which one provides a better user experience. Here's a step-by-step guide on how to conduct A/B testing for UX:

Define Your Goals and Metrics

Clearly define the objectives of your A/B test. What specific aspect of the user experience are you trying to improve (e.g., click-through rates, conversion rates, user engagement)? Also, identify the key performance metrics you will use to measure success.

Select the Element to Test

Choose a specific element or feature of your website, app, or digital product that you want to test. This could be a button, a headline, an image, a form, or any other UX component.

Generate Hypotheses

Formulate hypotheses about how changing the selected element could impact the user experience. For example, "Changing the colour of the 'Buy Now' button to green will increase click-through rates."

Create Variants

Develop two or more versions (variants) of the element you are testing. One version (the control) should remain unchanged, while the other version(s) incorporate the proposed changes based on your hypotheses.

Randomly Assign Users

Use random assignment to decide which variant each user sees, so that the groups differ only in the design they are shown. This helps eliminate bias and provides a fair comparison.
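As a rough illustration, one common way to assign users is to hash a user ID together with an experiment name, so that the same user always lands in the same group. The sketch below is not taken from any particular testing platform; the function name `assign_variant`, the experiment name, and the user ID format are all hypothetical. Note that hashing gives a pseudo-random, repeatable split rather than a truly random one.

```python
import hashlib

def assign_variant(user_id: str, experiment: str, variants=("control", "variant")) -> str:
    """Map a user to a variant using a hash of the experiment name and user ID.

    The same user always gets the same variant for a given experiment,
    and different experiments split users independently of each other.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]

# Hypothetical usage: split 10,000 made-up user IDs and count each group.
counts = {"control": 0, "variant": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "buy-button-colour")] += 1
print(counts)  # the two counts should be close to an even split
```

A stable assignment like this means a returning user does not flip between designs mid-test, which would otherwise blur the comparison.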

Implement the A/B Test

Deploy the A/B test using a testing platform or software that allows you to serve the different variants to users in a controlled manner. Make sure that your sample size is large enough to detect the difference you care about; otherwise the results will not be reliable.
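To get a feel for how many users a test might need, here is a minimal sketch using the standard normal-approximation formula for comparing two conversion rates. The baseline rate, expected rate, significance level, and power below are illustrative assumptions, not recommendations, and `sample_size_per_group` is a hypothetical helper name.

```python
import math
from statistics import NormalDist

def sample_size_per_group(baseline_rate, expected_rate, alpha=0.05, power=0.8):
    """Approximate users needed per group to detect a change in conversion rate.

    Uses the normal approximation for a two-sided test of two proportions.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the significance level
    z_beta = NormalDist().inv_cdf(power)           # critical value for the desired power
    variance = baseline_rate * (1 - baseline_rate) + expected_rate * (1 - expected_rate)
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)

# Hypothetical example: 10% baseline conversion, hoping to detect a rise to 12%.
print(sample_size_per_group(0.10, 0.12))
```

The smaller the difference you want to detect, the more users each group needs, which is why subtle design changes can take much longer to test than dramatic ones.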

Collect Data

Gather data on user interactions and behaviours for each variant. This may involve tracking metrics like click-through rates, conversion rates, bounce rates, session duration, and user feedback.

Analyse the Results

Conduct statistical analysis to determine which variant performs better on your chosen metrics. Various statistical methods can be used to compare the results, such as t-tests for averages (e.g., session duration) or chi-squared tests for counts and proportions (e.g., conversions).

Draw Conclusions

Based on the analysis, determine which variant provides a superior user experience. Does it outperform the control by a meaningful margin, and is that difference statistically significant?

Make Informed Decisions

Use the insights gained from the A/B test to make informed design decisions. If the variant outperforms the control, consider implementing the changes permanently.

Document Findings

Keep detailed records of the A/B test setup, results, and any insights gained. This documentation can be valuable for future reference and sharing findings with your team.

Iterate and Repeat

A/B testing should be an ongoing process for continuous UX improvement. Based on the results, iterate on your designs and conduct further tests to refine the user experience.

Ethical Considerations

Ensure that your A/B testing process adheres to ethical guidelines. Avoid deceptive practices, and consider user privacy and consent when collecting data.

User Feedback

Consider incorporating qualitative data, such as user feedback and usability testing, alongside A/B testing to gain a deeper understanding of the user experience.

Communication

Share the results and insights from your A/B tests with your team, stakeholders, and relevant departments, as they can provide valuable input and help with decision-making.

Remember that A/B testing is most effective when it aligns with your overall UX strategy and is used as a tool to continually enhance the user experience based on empirical data and user feedback.

Analysing Data from UX A/B Testing

Analysing data from UX A/B testing is a crucial step in determining which design or user experience variation performs better. Here's a guide on how to analyse the data effectively:

Define Key Metrics

Start by reviewing the key metrics and goals you established before conducting the A/B test. These metrics should align with the specific aspect of the user experience you were testing (e.g., conversion rates, click-through rates, bounce rates, session duration, and user satisfaction scores).

Organise Data

Collect and organise data for both the control and variant groups. Ensure that the data is clean, accurate, and properly labelled. Use spreadsheets or data analysis tools to manage your data.

Calculate Statistical Significance

To determine if the differences between the control and variant are statistically significant, you can use statistical tests such as t-tests, chi-squared tests, or regression analysis, depending on the type of data and the specific metric you are analysing.

Graphical Visualisation

Create visualisations such as line charts, bar graphs, or histograms to illustrate the differences between the control and variant. Visual representations can make it easier to interpret the data.

Analyse Conversion Rates

If you are testing conversion rates (e.g., sign-ups, purchases, downloads), calculate the conversion rate for both the control and variant groups. Determine if there is a statistically significant difference in conversion rates.
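As a sketch of what this can look like in practice, the snippet below computes both conversion rates and runs a two-proportion z-test, which is one reasonable choice for this kind of data (for a two-group comparison it is closely related to the chi-squared test). The visitor and conversion counts are made up for illustration, and `two_proportion_z_test` is a hypothetical helper.

```python
import math
from statistics import NormalDist

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare two conversion rates; returns (rate_a, rate_b, z, two-sided p-value).

    Assumes the pooled rate is strictly between 0 and 1, so the standard error is non-zero.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # overall rate under "no difference"
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return rate_a, rate_b, z, p_value

# Hypothetical data: 200 of 4,000 control users converted vs 260 of 4,000 variant users.
rate_a, rate_b, z, p = two_proportion_z_test(200, 4000, 260, 4000)
print(f"control={rate_a:.3f} variant={rate_b:.3f} z={z:.2f} p={p:.4f}")
```

A p-value below your chosen threshold (0.05 is a common convention) suggests the difference is unlikely to be due to chance alone.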

Analyse Engagement Metrics

For engagement-focused metrics (e.g., session duration, pageviews per session), compare the averages or distributions between the control and variant groups. Identify any significant differences.
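For metrics like session duration, which are often skewed, one option is a permutation test: shuffle the group labels many times and see how often a difference as large as the observed one appears by chance. This is a swapped-in alternative to the t-test mentioned earlier, chosen here because it needs only the standard library. The data and the `permutation_test` helper are hypothetical.

```python
import random
from statistics import mean

def permutation_test(control, variant, n_permutations=5000, seed=0):
    """Estimate a two-sided p-value for the difference in means by shuffling labels."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same answer
    observed = mean(variant) - mean(control)
    combined = list(control) + list(variant)
    n_control = len(control)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(combined)
        diff = mean(combined[n_control:]) - mean(combined[:n_control])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add one to numerator and denominator so the estimate is never exactly zero.
    p_value = (extreme + 1) / (n_permutations + 1)
    return observed, p_value

# Hypothetical session durations in seconds.
control_durations = [62, 75, 48, 90, 55, 70, 66, 81]
variant_durations = [88, 95, 79, 102, 91, 85, 110, 97]
observed, p = permutation_test(control_durations, variant_durations)
print(f"difference in means = {observed:.1f}s, p = {p:.4f}")
```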

Check for Segmentation

Segment the data to explore whether different user groups (e.g., new users vs. returning users, different demographic segments) exhibit different behaviours in response to the variations. This can help you understand if the impact of the change is consistent across different user segments.
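A simple way to start is to compute the conversion rate for every combination of segment and variant. The sketch below assumes each record is a tuple of (segment, variant, converted); that record shape, the sample data, and the `conversion_by_segment` helper are all hypothetical.

```python
from collections import defaultdict

def conversion_by_segment(events):
    """Return {(segment, variant): conversion rate} from (segment, variant, converted) tuples."""
    totals = defaultdict(lambda: [0, 0])  # (segment, variant) -> [conversions, users]
    for segment, variant, converted in events:
        totals[(segment, variant)][0] += int(converted)
        totals[(segment, variant)][1] += 1
    return {key: conversions / users for key, (conversions, users) in totals.items()}

# Hypothetical records for illustration only.
events = [
    ("new", "control", True), ("new", "control", False),
    ("new", "variant", True), ("new", "variant", True),
    ("returning", "control", False), ("returning", "variant", False),
]
for (segment, variant), rate in sorted(conversion_by_segment(events).items()):
    print(f"{segment:<10} {variant:<8} {rate:.0%}")
```

Keep in mind that each segment contains fewer users than the full test, so differences within a segment are less reliable than the overall result.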

Time-Based Analysis

Analyse the data over time to see whether the impact of the variation is consistent or whether trends or patterns emerge over days or weeks.

Statistical Confidence

Calculate confidence intervals to understand the range of values within which the true effect of the variation is likely to lie. A narrower confidence interval indicates more precise results.
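For conversion rates, a confidence interval for the difference between the two groups can be sketched with the normal approximation, as below. The counts are invented for illustration, and `diff_confidence_interval` is a hypothetical helper; for very small samples or very low rates, other interval methods may be more appropriate.

```python
import math
from statistics import NormalDist

def diff_confidence_interval(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Normal-approximation confidence interval for (rate_b - rate_a)."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    se = math.sqrt(rate_a * (1 - rate_a) / n_a + rate_b * (1 - rate_b) / n_b)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    diff = rate_b - rate_a
    return diff - z * se, diff + z * se

# Hypothetical data: 200 of 4,000 control users converted vs 260 of 4,000 variant users.
low, high = diff_confidence_interval(200, 4000, 260, 4000)
print(f"95% CI for the lift: {low:.4f} to {high:.4f}")
```

If the whole interval sits above zero, the variant is likely an improvement; how far above zero it sits tells you whether the improvement is large enough to matter.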

Consider Practical Significance

While statistical significance is important, also consider whether the observed differences are practically significant. Sometimes, a small but statistically significant difference may not have a meaningful impact on the user experience or business goals.

Qualitative Insights

Combine quantitative data with qualitative insights from user feedback, usability testing, and user interviews. Qualitative data can provide context and explanations for the quantitative findings.

Iteration and Further Testing

Based on the analysis, decide whether to implement the changes permanently, revert to the original design, or conduct further testing with refined variations. A/B testing is often an iterative process.

Documentation

Document your analysis process, including the statistical tests, results, conclusions, and any design or UX improvement recommendations.

Communicate Findings

Share the results and insights with your team, stakeholders, and relevant departments. Effective communication ensures that everyone is informed and can contribute to decision-making.

Continuous Improvement

Remember that UX A/B testing is a continuous process for improving the user experience. Use the insights from your analysis to inform future design decisions and iterations.

Analysing data from UX A/B testing requires a combination of statistical rigour and a deep understanding of the user experience. It's essential to approach the analysis with a clear focus on the goals and metrics that matter most for the specific UX element you are testing.

Single Variant vs Multivariant Testing

Single variant testing (A/B testing) and multivariant testing (A/B/C testing and beyond) are two common approaches to experimentation and optimisation in various fields, including marketing, product development, and UX. They differ in the number of variations being tested simultaneously. Here's an overview of both approaches:

Single Variant Testing (A/B Testing)

A/B Testing: In single variant testing, you compare two versions of something, typically referred to as A and B. One version (A) is the control, while the other (B) is a variant with a specific change or modification.

Simplicity: A/B testing is straightforward and relatively simple to set up and manage. It is an excellent choice for testing one specific change or hypothesis at a time.

Clear Comparison: The primary advantage of A/B testing is that it provides a clear comparison between the control and a single variant, making it easy to determine the impact of that specific change on a metric of interest.

Faster Results: Since you are testing a single variation, A/B tests can often yield results more quickly than multivariant tests.

Lower Sample Size Requirements: A/B tests typically require a smaller sample size to achieve statistical significance because you are comparing only two variations.

Limited Scope: A/B testing is most effective when you have a specific, isolated hypothesis to test. If you have multiple changes you want to evaluate, it may require running multiple A/B tests.

Multivariant Testing

Multivariant: In multivariant testing, you compare three or more versions: A (control) and multiple variants (B, C, D, and so on). Each variant includes different changes or modifications.

Complexity: Multivariant testing is more complex to set up and manage compared to A/B testing. It requires careful planning and tracking of multiple variations simultaneously.

Comprehensive Testing: Multivariant testing allows you to test the impact of multiple changes at once. This can be useful when you want to understand how different combinations of changes affect user behaviour or metrics.

Efficiency: It can be more efficient than running separate A/B tests for each individual change, especially when changes are interrelated and need to be tested together.

Longer Duration: Multivariant tests often require a longer duration to collect sufficient data, especially when testing a larger number of variations.

Higher Sample Size Requirements: Because you are testing multiple variations, multivariant tests generally require a larger sample size to achieve statistical significance for each variant.
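One reason for the higher bar is that every extra variant compared against the control is another chance of a false positive. A simple and conservative way to account for this, not mentioned above but widely used, is the Bonferroni correction: divide the significance threshold by the number of comparisons. The p-values below are made up, and `bonferroni_significant` is a hypothetical helper.

```python
def bonferroni_significant(p_values, alpha=0.05):
    """Flag which variants remain significant after a Bonferroni correction.

    p_values maps each variant name to its p-value from a comparison with the control.
    """
    threshold = alpha / len(p_values)  # stricter threshold for each individual comparison
    return {name: p <= threshold for name, p in p_values.items()}

# Hypothetical p-values for variants B, C and D, each compared against control A.
results = bonferroni_significant({"B": 0.012, "C": 0.030, "D": 0.20})
print(results)  # → {'B': True, 'C': False, 'D': False}
```

In this example variant C would have passed an uncorrected 0.05 threshold, but it does not pass the corrected threshold of roughly 0.0167.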

Choosing Between Single Variant and Multivariant Testing

The choice between single variant and multivariant testing depends on your specific goals, resources, and the complexity of the changes you want to test:

Use A/B testing (single variant) when you have a single, well-defined hypothesis or change you want to test quickly and with a smaller sample size.

Use multivariant testing (A/B/C testing or beyond) to test the combined impact of multiple changes, especially when these changes are interconnected, and you have the necessary resources and time to manage a more complex experiment.

Ultimately, the choice should be guided by your research objectives and the granularity required to understand how changes affect user behaviour and metrics in your particular context.

Pros and Cons of A/B Testing for UX

A/B testing is a valuable method for improving the user experience (UX) of websites, applications, and digital products. However, like any approach, it has its pros and cons. Here's a breakdown:

Pros of A/B Testing for UX:

Data-Driven Decision-Making: A/B testing relies on empirical data, providing objective insights into user behaviour and preferences and allowing for informed design decisions.

Identifies Effective Changes: A/B testing helps determine which design or UX changes lead to improvements in key metrics, such as conversion rates, engagement, and user satisfaction.

Quantifiable Results: It provides measurable and quantifiable results, making it easy to assess the impact of changes on the user experience and track progress over time.

Efficiency: A/B tests are relatively quick to set up and can yield results within a reasonable timeframe, allowing for rapid iteration and optimisation.

Minimises Risk: By testing changes with a subset of users, A/B testing minimises the risk of making large-scale, unproven design changes that could negatively impact the entire user base.

Objective Insights: A/B testing reduces biases and subjectivity in decision-making by relying on user data rather than individual opinions or assumptions.

Cons of A/B Testing for UX:

Limited Scope: A/B testing is most effective for isolated changes or hypotheses. It may not be suitable for testing complex, interdependent design changes or holistic UX improvements.

Resource-Intensive: Running A/B tests requires time, resources, and technical infrastructure for setup, data collection, and analysis. Small teams with limited resources may find it challenging to implement.

Potential for False Negatives: A/B testing may not always detect subtle but meaningful changes in user behaviour, leading to false negatives if the sample size is too small or the test duration is too short.

Narrow Focus: A/B testing tends to focus on quantitative data, which may not capture the full range of user emotions, motivations, or qualitative insights that can inform UX improvements.

User Fatigue: Repeated A/B testing with users can lead to user fatigue, annoyance, or a diminished overall experience if not managed carefully.

Ethical Considerations: A/B testing must be conducted ethically and with user consent. Deceptive practices or tests that negatively impact users without their knowledge are unethical.

Limitations of Metrics: The choice of metrics for A/B testing is crucial. Focusing solely on one metric may lead to unintended consequences in other aspects of the user experience.

Interpretation Complexity: Interpreting A/B test results can be challenging, particularly when dealing with complex user behaviours or interactions that involve multiple variables.

In summary, A/B testing is a valuable tool for UX improvement when used appropriately. It provides data-driven insights and helps identify effective design changes. However, it should be complemented with other research methods, such as user interviews, usability testing, and qualitative analysis, to gain a holistic understanding of the user experience and address its limitations.

Where Can You Learn More About UX Design?

Our BCS Foundation Certificate In User Experience training course is perfect for anyone who wants to increase their knowledge of User Experience. The BCS User Experience course will teach you the UX methodology, best practices, techniques, and a strategy for creating a successful user experience. The course will cover the following topics:

- Guiding Principles

- User Research

- Illustrating The Context Of Use

- Measuring Usability

- Information Architecture

- Interaction Design

- Visual Design

- User Interface Prototyping

- Usability Evaluation


Final Notes On A/B Testing UX

A/B testing offers UX designers a data-driven compass to navigate the intricate landscape of user preferences. Its strengths are clear: it is a scientific approach to optimising UX, it objectively identifies effective design changes, and it delivers quantifiable results. Yet, as with any method, A/B testing has its limitations, especially in addressing holistic UX improvements and ethical considerations.

In closing, it's crucial to remember that A/B testing is a valuable piece of the UX puzzle but not the whole picture. When integrated thoughtfully into the UX design process, it becomes a powerful ally in pursuing user-centric excellence.